Yes, actually

Does discovery-based instruction enhance learning?

I had some great comments on last Friday’s post about the everybody-else-is-wrong article by Kirschner et al. One of them, by George Lilley, led me, via a wonderful webinar by Rachel Lambert, to an article by Alfieri, Brooks, Aldrich, and Tenenbaum whose title is the subtitle of this post. Its answer, surprisingly, is yes. I say surprisingly because, as Rachel Lambert explains, this article is cited in support of explicit instruction.

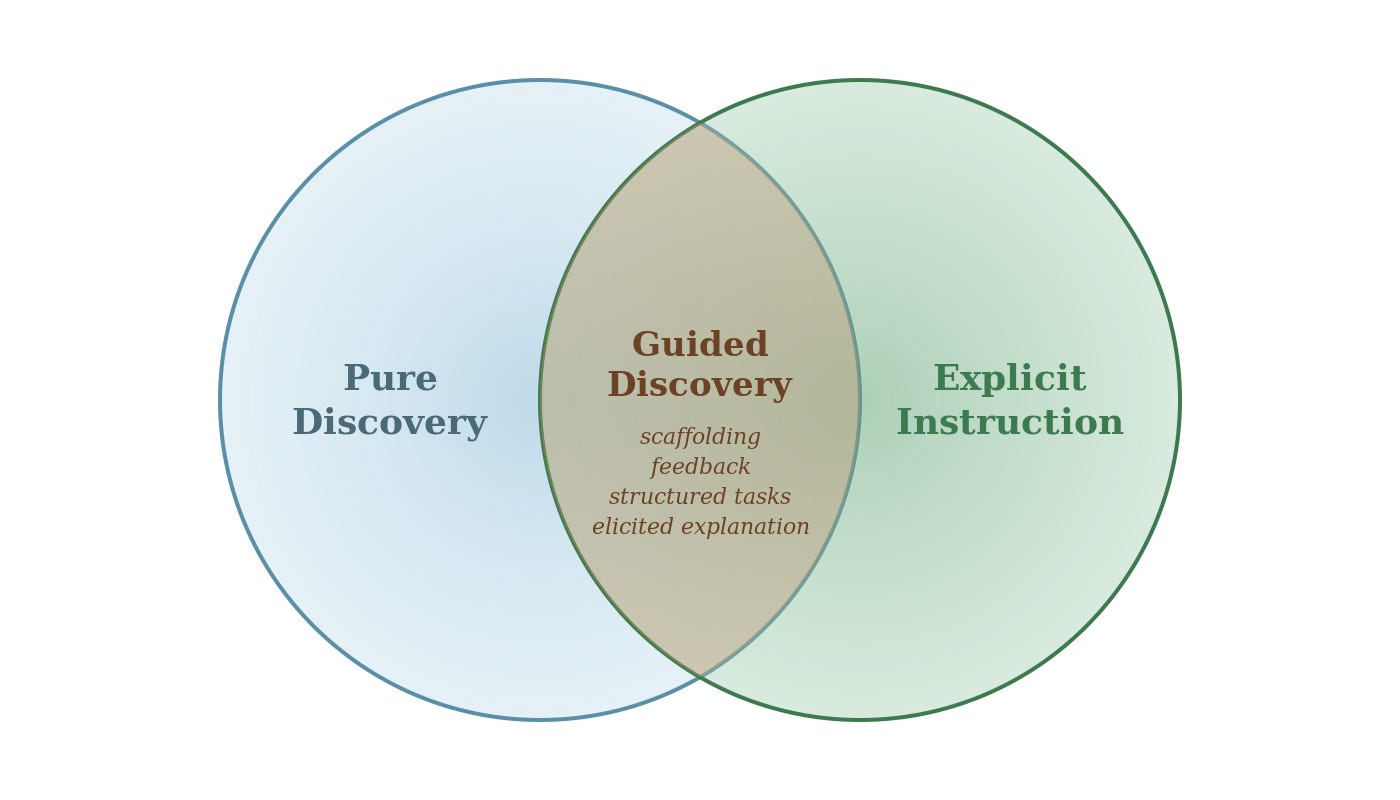

Part of the problem in this debate is that people throw around terms without precise definitions (see “minimally guided instruction”), so I appreciated this article’s attempt to define its terms. Alfieri et al. is a meta-analysis of 164 studies covering math, computer skills, science, problem solving, physical/motor skills, and verbal/social skills. They ran two different analyses. The first compared *unassisted discovery* learning with *explicit instruction*,[1] and explicit instruction won. I assume that’s the result the proponents of explicit instruction like to point to. As I said last time, I don’t find this result surprising, and I don’t know of any math curriculum that advocates unassisted discovery. Most curricula assume the teacher will do something other than sit around watching kids magically acquire knowledge.

The second analysis compared enhanced discovery with other forms of instruction, and this time enhanced discovery won. One form of enhanced discovery, guided discovery, won big, with an effect size of 0.5 overall and 0.29 for math.[2] So yes, actually, discovery-based instruction does enhance learning.

Enhanced discovery versus other forms of instruction

Each of these categories is divided into subcategories, and the details are both interesting and a little confusing. I’ll give the details here and then try to draw some conclusions, particularly for math. Enhanced discovery is divided into

*generation*, where learners “generate rules, strategies, images, or answers to experimenters’ questions,”

*elicited explanation*, where learners “explain some aspect of the target task or target material, either to themselves or to the experimenters,” and

*guided discovery*, which involves “either some form of instructional guidance . . . or regular feedback to assist the learner at each stage of the learning tasks.”

The other forms of instruction to which enhanced discovery was compared include

*direct teaching*, “explicit teaching of strategies, procedures, concepts, or rules in the form of formal lectures, models, demonstrations, and so forth and/or structured problem solving,”

*feedback*, in which “experimenters responded to learners’ progress to provide hints, cues, or objectives,”

*worked examples*, which “included provided solutions to problems similar to the targets,” and

*explanations provided*, where “explanations were provided to learners about the target material or the goal task.”[3]

The authors were able to break down the overall effect for enhanced discovery into effects for each of its subcategories. Here generation on its own fared poorly (−0.15), which I don’t find surprising because it sounds a little close to unguided discovery. Elicited explanation fared well (0.36) and guided discovery fared very well (0.5).
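For readers who want to translate these effect sizes into percentile terms, here is a minimal sketch. It rests on an assumption of mine, not the paper's: that scores in both groups are roughly normally distributed with the same spread, so the share of the comparison group that the average learner in the treatment group outscores is the standard normal CDF evaluated at the effect size. The function name `share_outscored` is hypothetical, chosen only for this illustration.

```python
from math import erf, sqrt


def share_outscored(d: float) -> float:
    """Share of the comparison group outscored by the average treatment-group learner.

    Assumption (mine, for illustration): both groups are normally
    distributed with equal spread, so the answer is the standard
    normal CDF evaluated at the effect size d.
    """
    return 0.5 * (1.0 + erf(d / sqrt(2.0)))


# Effect sizes for the enhanced-discovery subcategories quoted above.
effects = {
    "generation": -0.15,
    "elicited explanation": 0.36,
    "guided discovery": 0.50,
}

for name, d in effects.items():
    print(f"{name}: d = {d:+.2f}, outscores about {share_outscored(d):.0%}")
```

Under that assumption, guided discovery's 0.5 comes out at roughly 69%, which matches the "about 70%" reading of the effect size. A negative effect size lands below 50%, meaning the average learner in that condition did worse than the average learner in the comparison group.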

On the “other instruction” side the study was not quite powerful enough to break out effect sizes, with the exception of worked examples, which on its own performed about as well as enhanced discovery. This agrees with the findings I discussed last week. It also means that taking out worked examples would leave the other categories, including direct teaching, even worse off compared to guided discovery.[4]

Who’s doing the talking?

Explanations occur on both sides of the comparison, but there is a crucial difference. Under enhanced discovery we have elicited explanations, where students are explaining their thinking; on the other side we have explanations provided, where teachers are telling students what to do. In the fog of words people use, one thing that emerges fairly clearly from the proponents of explicit instruction is that they are pushing the traditional “I do, we do, you do” approach to teaching where teachers talk first, then the class discusses, then students work individually.

This research study does not support that position. Its evidence points more to having students try things and explain their thinking, with the teacher providing guidance and following up with a synthesis. There is still a role for explicit instruction in such an approach, but it doesn’t come first.

What does this mean for math?

An interesting aspect of both studies in this paper is that the effect sizes are smaller for math than for other subject areas. For the first study, unassisted discovery versus explicit instruction, we have an effect size of −0.16 for math, notably the smallest negative effect of any domain: compare science at −0.39, problem solving at −0.48, and verbal/social skills at −0.95. So even in the “discovery loses” meta-analysis, math was the domain where discovery came closest to holding its own.

In the second study, enhanced discovery versus other instruction, the effect size for math was 0.29—again on the lower end compared to other domains.

To me this suggests that math is a domain where instructional design quality matters most, which is an argument for a careful middle-ground approach to math instruction rather than extreme positions.

What does this mean in the classroom?

Most of the studies in this analysis were small-sample lab experiments, not year-long or multi-year studies of curricula. That limits how far you can extrapolate these findings to the debates about what should happen in the classroom. I’m going to pick up on some of the classroom studies in future posts. To repeat the caution from Adding It Up:

. . . the effectiveness of mathematics teaching and learning does not rest in simple labels. Rather, the quality of instruction is a function of teachers’ knowledge and use of mathematical content, teachers’ attention to and handling of students, and students’ engagement in and use of mathematical tasks.

What I do take away from this study is that good classroom practice involves students exploring problems and explaining their thinking, and teachers providing feedback and guidance. Sounds a lot like problem-based instruction to me.

[1] I’ll use italics when I introduce the exact terms used in the article.

[2] That is, the guided discovery group was 0.5 standard deviations above the other group, meaning the average person in that group did better than about 70% of the people in the other group.

[3] There was also a coding for *baseline* conditions, which separated out studies where the comparison group received essentially no instruction: they either did an unrelated task for the same amount of time or simply took pre- and post-tests with a time gap. In other words, these studies asked “does discovery beat doing nothing?” rather than “does discovery beat other real instruction?” There was also a coding for *other*, a miscellaneous bunch of studies that didn’t quite fit the other categories.

[4] I found the inclusion of feedback in the “other instruction” categories a little confusing, because elsewhere in the paper the authors say “Enhanced-discovery methods include a number of techniques from feedback to scaffolding.” Rachel Lambert’s interpretation of this tension is that studies were coded under feedback if that was the defining feature of the instruction; when feedback was accompanied by other treatments the study was coded under enhanced discovery. Without access to the actual codings for individual studies it’s not possible to say for sure.

Worked examples rating highly is unsurprising too! But I’m shocked at how infrequently I see this instructional move among primarily direct instruction math teachers. I love working examples with students (either after they have grappled with a concept, or just for procedures that are unintuitive). They get to hear my thought process, and I try to show several methods on the same problem.

I appreciate the distinction between teachers telling students explanations versus guiding students to explain concepts or solution paths themselves. It’s not surprising to me that teachers just telling explanations doesn’t perform well in the research because students are passive listeners. I think the interesting follow up, though, is how teachers maximize the student discussion. I have tried to explore this in my book in terms of the types of questions teachers ask when debriefing a task, but as a coach I’ve always found this to be the piece teachers struggle with most and I would imagine that how we engage students in these types of discussions matters for learning outcomes.